Summary

As of the latest code, you can redirect the primary UI stack to a custom render target. It's no longer possible to disable the primary UI completely, but you can redirect its output to your own diegetic world objects.

The example below places a fixed diegetic UI canvas in the starting room of Privateer's Hold using our friend UserInterfaceRenderTarget. It only displays while the game is paused (e.g. when a UI window is opened or something pops up), and only at this position in the world. I trust you'll be able to take the concepts and adapt them to your needs in VR.

Code

Create a new C# script called FloatingUITest.cs and paste in the following code.


    using UnityEngine;
    using UnityEngine.UI;
    using DaggerfallWorkshop.Game;
    using DaggerfallWorkshop.Game.UserInterface;

    [RequireComponent(typeof(UserInterfaceRenderTarget))]
    public class FloatingUITest : MonoBehaviour
    {
        UserInterfaceRenderTarget ui;
        Vector2 virtualMousePos = Vector2.zero;
        RawImage rawImage;
        Canvas canvas;
        Vector2 offscreenMouse = new Vector2(-1, -1);

        private void Start()
        {
            // Redirect main UI stack to our custom target and disable HUD
            ui = GetComponent<UserInterfaceRenderTarget>();
            DaggerfallUI.Instance.CustomRenderTarget = ui;
            DaggerfallUI.Instance.enableHUD = false;

            // Get references (RawImage and Canvas live on this same GameObject)
            rawImage = GetComponent<RawImage>();
            canvas = GetComponent<Canvas>();
        }

        private void Update()
        {
            // Do nothing unless game is paused - this can happen at any time, either through user input or a quest message popping up text
            // When not active, set custom mouse position to null to release any custom position set by a previously open UI session
            // Also disable the raw image when not required - in VR you would manage your own output canvas as needed
            if (!GameManager.IsGamePaused)
            {
                rawImage.enabled = false;
                DaggerfallUI.Instance.CustomMousePosition = null;
                return;
            }

            // Show the raw image - in VR you would bring up your diegetic output panel in front of the player
            rawImage.enabled = true;

            // Park the virtual mouse offscreen unless a position can be resolved below
            virtualMousePos = offscreenMouse;

            // Get rect of raw image
            Rect rect = RectTransformUtility.PixelAdjustRect(rawImage.rectTransform, canvas);

            // Is screen position inside rectTransform? Here you would use your own means of firing a ray at target canvas from controller
            if (RectTransformUtility.RectangleContainsScreenPoint(rawImage.rectTransform, Input.mousePosition, GameManager.Instance.MainCamera))
            {
                // Get local point inside canvas
                Vector2 localPoint;
                if (RectTransformUtility.ScreenPointToLocalPointInRectangle(rawImage.rectTransform, Input.mousePosition, GameManager.Instance.MainCamera, out localPoint))
                {
                    // Convert to UV coordinates inside the transform area using rect.
                    // localPoint is relative to the pivot, so this assumes a centred pivot (0.5, 0.5):
                    // adding 0.5 shifts u and v into the 0-1 domain, and v is inverted so 0 is the top edge
                    float u = localPoint.x / rect.width + 0.5f;
                    float v = 1.0f - (localPoint.y / rect.height + 0.5f);

                    // We know the size of the render target, so we can convert this into x, y coordinates
                    float x = u * ui.TargetSize.x;
                    float y = v * ui.TargetSize.y;

                    // Store as the virtual mouse position
                    virtualMousePos = new Vector2(x, y);
                }
            }

            // Feed custom mouse position into UI system
            DaggerfallUI.Instance.CustomMousePosition = virtualMousePos;
        }
    }
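The UV conversion in Update() is the part most worth checking when you adapt this to VR, so here it is pulled out into plain C# that can be tested outside Unity. This is a minimal sketch only: `VirtualMouseMath` and `LocalPointToTarget` are hypothetical names, it assumes a centred pivot just like the script does, and 320 x 200 is simply an example target size (in the script the real size comes from `ui.TargetSize`).

```csharp
using System;

// Hypothetical helper (not part of Daggerfall Unity): converts a point in
// RectTransform-local space into pixel coordinates on the UI render target.
// Assumes a centred pivot, so localX ranges over [-rectWidth/2, rectWidth/2].
public static class VirtualMouseMath
{
    public static (float X, float Y) LocalPointToTarget(
        float localX, float localY,
        float rectWidth, float rectHeight,
        float targetWidth, float targetHeight)
    {
        // Shift from pivot-centred space into the 0-1 UV domain
        float u = localX / rectWidth + 0.5f;
        // Flip v so that 0 is the top edge, matching the UI's top-left origin
        float v = 1.0f - (localY / rectHeight + 0.5f);
        // Scale UVs up to render target pixels
        return (u * targetWidth, v * targetHeight);
    }
}

public static class Program
{
    public static void Main()
    {
        // Centre of a 2 x 1.5 canvas maps to the centre of a 320 x 200 target
        var centre = VirtualMouseMath.LocalPointToTarget(0f, 0f, 2f, 1.5f, 320f, 200f);
        Console.WriteLine($"{centre.X}, {centre.Y}"); // 160, 100

        // Top-left corner of the canvas maps to (0, 0)
        var topLeft = VirtualMouseMath.LocalPointToTarget(-1f, 0.75f, 2f, 1.5f, 320f, 200f);
        Console.WriteLine($"{topLeft.X}, {topLeft.Y}"); // 0, 0
    }
}
```

If your panel's pivot isn't centred, Unity's `Rect.PointToNormalized` is an alternative way to get the 0-1 values, since it works from the rect's own min/max rather than assuming the pivot sits in the middle.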

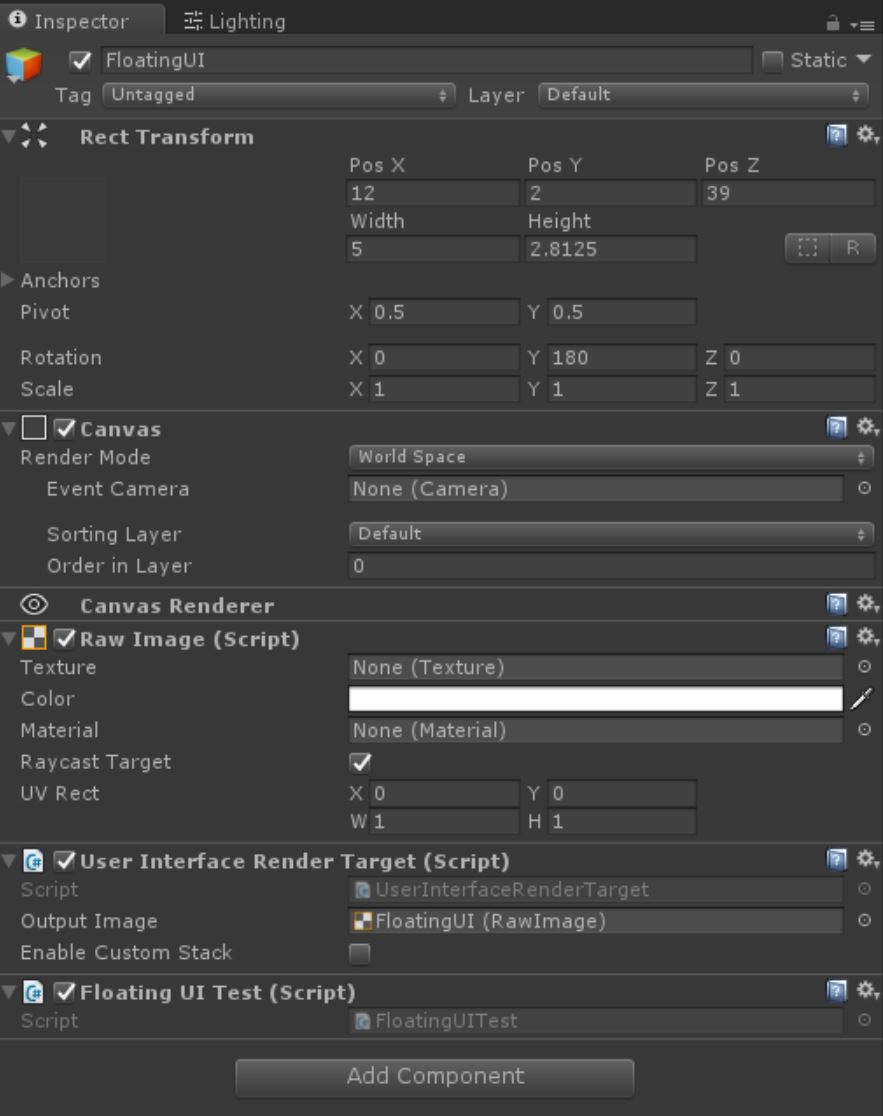

Now create a GameObject called FloatingUI in the root of the scene and configure its Inspector exactly as shown in the screenshot, with all components and their settings. This positions it in the starting cave in Privateer's Hold (PH), facing the player when you start a new game or load a save in this room.

When you hit Play, face the world object and open UI windows as normal. They will be redirected to the custom target, along with mouse input.


Improvements

One thing still to do is let you supply your own scroll events and click input. If you can get things started, I'll expose these events so you can inject scrolls and clicks as well.

Example

In my example, the mouse stands in for the "laser pointer" from a Vive controller. However, everything is happening in world space.
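To swap the mouse for a controller, you need a way to find where the laser hits the panel without going through a camera. One option is a plain ray-plane intersection, sketched below under some assumptions: `LaserPointer`, `TryGetLocalHit` and all parameters are hypothetical names (not a Daggerfall Unity or SteamVR API), `canvasRight` and `canvasUp` are assumed to be unit-length and perpendicular, and it uses `System.Numerics` so it runs outside Unity. Inside Unity you would more likely build a `UnityEngine.Plane` from the panel and call `Plane.Raycast` with the controller's pose. The result is centre-relative and in world units, so divide by the panel's world-space width and height before converting to render target coordinates.

```csharp
using System;
using System.Numerics;

// Hypothetical sketch: intersect a controller "laser" ray with the plane of a
// world-space panel and express the hit in the panel's local 2D axes.
public static class LaserPointer
{
    public static bool TryGetLocalHit(
        Vector3 rayOrigin, Vector3 rayDir,
        Vector3 canvasCentre, Vector3 canvasRight, Vector3 canvasUp,
        out Vector2 localPoint)
    {
        localPoint = Vector2.Zero;

        // Plane normal from the panel axes (assumed orthonormal)
        Vector3 normal = Vector3.Cross(canvasRight, canvasUp);
        float denom = Vector3.Dot(normal, rayDir);
        if (Math.Abs(denom) < 1e-6f)
            return false; // Ray is parallel to the panel

        // Distance along the ray (in units of rayDir) to the plane
        float t = Vector3.Dot(normal, canvasCentre - rayOrigin) / denom;
        if (t < 0f)
            return false; // Panel is behind the controller

        // Project the hit point onto the panel axes, relative to its centre
        Vector3 offset = rayOrigin + t * rayDir - canvasCentre;
        localPoint = new Vector2(Vector3.Dot(offset, canvasRight), Vector3.Dot(offset, canvasUp));
        return true;
    }
}

public static class Program
{
    public static void Main()
    {
        // Controller at head height, aiming up and to the right at a panel 2 units ahead
        var origin = new Vector3(0f, 1.5f, 0f);
        var direction = new Vector3(0.5f, 0.5f, 2f);
        var centre = new Vector3(0f, 1.5f, 2f);

        if (LaserPointer.TryGetLocalHit(origin, direction, centre, Vector3.UnitX, Vector3.UnitY, out Vector2 local))
            Console.WriteLine($"Hit at local ({local.X}, {local.Y})");
        else
            Console.WriteLine("No hit");
    }
}
```

Returning false for parallel and behind-the-controller rays is what lets you park the virtual mouse offscreen in those cases, the same way the script falls back to `offscreenMouse` when the mouse is outside the panel.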